How do you solve a problem like ORMs?

To be honest, I didn't think databases and ORMs would be the first thing I would talk about when I return to my blog. There are several topical issues and debates that I would want to wade into. But the ubiquitous nature of databases and this problem is what forces me to want to express my thoughts on this topics. So here they are.

Database-driven applications dominate the landscape in terms of software solutions in most industries. If you find yourself designing a software solution for some industry problem, chances are you will consider using a relational database as part of that solution.

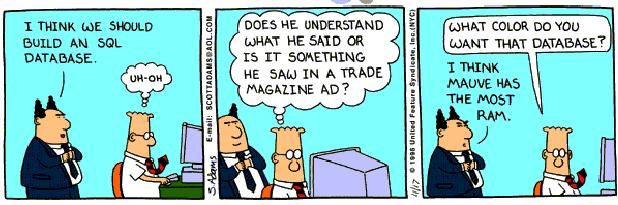

Programming languages, ORM frameworks and relational databases are all fairly mature technologies. In spite of this, it is staggering to see how every ORM library is still full of trade-offs. Most developers are all too familiar with the slippery slope, that moment of inflection when the amount of effort to maintain and re-use your ORM library exceeds the output. To call them the Vietnam of computer science is, albeit jaded, still very accurate.

This article does not intend to regurgitate the intricacies of this impedance mismatch. People more capable than myself have sufficiently described the problem. Instead, I wish to examine the trade-offs from the point of view of my preferred strategy, which is three-fold:

- Using a database-first approach, i.e. your database schema as the golden source, generate entities in your preferred OO language in a concrete-class-per-table style. Generate metadata mapping to bridge the two models.

- Hand-craft your SQL.

- Leverage third-party solutions for caching, change-tracking, identifier generation, etc., when the situation warrants it.

Using this approach by default as a starting point, I believe the overall problem is sufficiently addressed to allow us to carry on focussing on essential tasks, such as solving the original problem at hand.

So, let's begin.

Entity modelling (identities, hierarchies and associations)

Let's start with the hardest problem first, that of the insufficiency of either modelling paradigm (OO or relational database design) to accurately/elegantly represent the other. Most DDD and OO purists will refer to this as the most irksome issue within this space.

Identity

In terms of mapping identity, it does feel like we're forcing a square peg into a round hole. Candidate keys within relvars and object identity within OO languages are two separate constructs forged in two separate paradigms. To me, trying to map between those two concepts is highly illogical. We don't attempt to do that when converting any other model into our OO design. For instance, if my bank account id is xyz, do I ever force my object reference , i.e. the actual object pointer to somehow hold this information rather than an address? If not, why are we so keen on this when mapping our relational model?

The concept of candidate keys within relational systems is fairly illuminating when thinking about this. A candidate key is an irreducible subset of attributes in a relvar that is unique, i.e. no two tuples in that relvar have the same values for that subset of attributes. This can easily be understood as the identifier of that tuple within that relvar, or of that object within that collection.

So, in that sense, the concept of comparing identifiers is distinct from typical language comparison constructs such as '==' operator or the .Equals method). It is up to the implementer to decide whether to reuse the identifier comparison for equality. That is simply a matter of judgement. It is dependent on the problem you are trying to solve. Conversely, the set of all attributes of R has the uniqueness property. Similarly two objects could be considered equal if all member fields are equal. This is simply dependent on the problem you are trying to solve.

Just have a way of identifying candidate keys (Id or Ids) within your objects. Simply generate a new method, such as HasSameIdentity which compares two objects based on their candidate keys. Or generate comparators. It is that simple. This provides the flexibility to override your .Equals (and .Hashcode) methods to simply rely on the primary key. The implication is that your application can live with the assumption that if the primary key matches, the objects are equal. Or indeed, modify those methods to compare all fields within that object. Nevertheless, this starting point offers most flexibility without having to give up on much. This issue is closely linked to change-tracking, something we'll be touching upon a little bit later (TL;DR -- just use third-party libraries for change tracking).

This is pretty much what typical ORM solutions do. They allow you, the developer, to declare what field/s must be considered to be identifiers.

Then comes the question of identifier generation. Who must own this? Should the creation of a new object, automatically generate a new value for that identifier? And how do you sync those values across from your client application down to your database? What if your client application is not the sole creator of such records within your table?

Many approaches exist: you could assign identity on persistence within the db. Or you may need that identity up front, in which case you use database functions/procedures to increment that identity within the database, ahead of creating that record within the table. Or you might need hi/lo generators. Again, that depends on the problem at hand.

Object hierarchies

Next up is inheritance and polymorphism, which don't really find equivalence in the relational world. Typically, ORMs use discriminator fields to map between a single table and a hierarchy of objects. Again, we avoid this altogether by generating a concrete class per table. I'm not suggesting we give up on the sophisticated modelling techniques that OO languages provide. Polymorphism is useful. That said, this platform allows us to define more sophisticated class hierarchies which use the generated entities as DTOs contained within. Access to field values can then simply be deferred to the relevant field on the constituent DTO. By favouring composition in this way over inheritance, a well-established tenet of OO design, we are free to create class hierarchies and to wield them with the same dexterity as before.

And what of performance? All the additional object creation is expensive. Yes, fair enough, but only so in about 1% of applications that are striving for that extraordinary performance where this amount of object creation matters. For most of us, we will barely notice.

Relationships

Moving on to associations, which is definitely a hard nut to crack. Typically, associations necessarily need manual intervention. While relational design allows us to model one-to-many and many-to-many relationships, the expected OO representations are sufficiently divergent to warrant that additional step. One can usually traverse both ways on that reference in the db. Modelling such traversal needs to be implemented in the OO world. Simply because there isn't a convention that would suffice all possible scenarios. If we have parent-child relationships in the db, when modelling it as entities, should the parent have a collection of children or should each child hold a reference to the parent object? Or both?

Again, by hand-crafting our entities and repository implementations which are respectively composed of the generated DTOs and DAOs, we can model such associations quite comfortably. Again, the generated code serves as a basis for a more elegant, hand-crafted entity model.

How much data is too much data?

Lazy loading vs eager loading

Having addressed our modelling problems, let us now turn our attention to the amount of data coming across the wire. How much data is too much data? If we have models that contain several columns, do we need to fetch all of them at once? And what if that field maps to a collection of child elements? Should they be loaded when the parent is loaded? Or should they be loaded when accessed? These again are some of the things that ORMs typically strive to provide seamless solutions for. From a programmer's perspective, all she needs to do is use that field; let the ORM hydrate it.

The slippery slope resurfaces here: When using lazy loading, after enough iterations, you invariably hit the N+1 issue, i.e. the access pattern results in the ORM running a query to populate each child in that collection. To be fair, most ORMs offer countermeasures, which are latent at first: namely, batching the population of child elements, or indeed eager-loading the children if they are more likely to be accessed than not.

This is what Ted Neward refers to in his famous article as enslaving one model to the other. This sort of initially fruitful, but ultimately troublesome approach within ORMs is borne from the desire to deal entirely in 'objects' and not in 'SQL'.

I suggest embracing the duality. Hearing ORM experts suggest developers be mindful of the SQL their ORMs generate, makes me think that the pursuit of a singular approach is fool's gold, really.

Caching

The next approach would be to use some sort of in-built caching layer to alleviate some of these problems. If an object references another object which does not change much, the argument is that such objects could be cached to reduce activity across the wire. Most ORM frameworks offer some sort of in-built caching solution for this. I'm not going to cover it in detail here, this article is getting way too long already, but in short, again, if you are fully leveraging the facilities available within your RDBMS, you can then easily use third-party caching solutions to plug the gaps, if any.

I wholeheartedly agree with the principle of loading only what you need. That makes perfect sense, whatever approach you take. With our solution, we have the benefit of crafting our SQL as we deem necessary and appropriate in that particular scenario. Eager load or lazy load consciously and at will!

What have we gained?

One could, very reasonably, argue that since this approach does not solve all the problems anyway and a lot of hand-crafting is still necessary, one might as well adopt ORMs for 90% of their use cases and employ alternatives for the remaining 10% where ORMs might not be a good fit.

While not entirely disagreeing with that approach (it is definitely a matter of choice), I would posit that none of the features that ORMs purport to provide are mandatorily needed in every solution. You do not need complex hierarchies, associations, lazy loading, change tracking and caching, all at once, in every single database-driven application. Nor are the problems that ORMs sometimes give rise to, if not some of the above, then things like generating sub-optimal SQL that leads to performance issues.

There is no silver bullet. While I can't promise even one order-of-magnitude improvement in productivity or reliability with this solution, this approach is definitely simpler. Most of the features available within ORMs aren't absolutely necessary in all scenarios. Avoid the ORM quagmire. Use this approach as a starting point, focus on the essence of the problem and iteratively grow your application.

ORMs = YAGNI

Phew, that's me done for now. Over the course of the next few weeks and months, I will be following up with tangible examples and solutions to demonstrate why this works. Stay tuned!